
Posted to commits@tvm.apache.org by GitBox <gi...@apache.org> on 2022/05/20 00:21:49 UTC

[GitHub] [tvm] Kevin-XiongC opened a new issue, #11383: [Bug] Numerical inconsistency for roi_align in Relay

Kevin-XiongC opened a new issue, #11383:

URL: https://github.com/apache/tvm/issues/11383

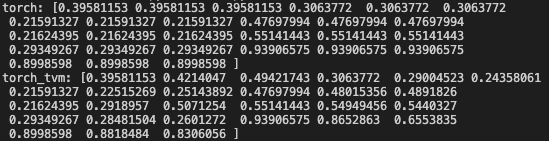

I am converting roi_align through the Paddle frontend. However, I ran into the same numerical issue that also appears with the PyTorch frontend, as reproduced below.

I wonder whether I missed something or whether this is in fact a bug.

### Expected behavior

Each output entry of the compiled model should be numerically close to the corresponding entry of the PyTorch result.
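A third, independent set of values can help show which side drifts. Below is a minimal pure-NumPy sketch of RoIAlign. It follows the torchvision semantics as I understand them:

- a half-pixel offset when `aligned=True`;
- no 1x1 clamp on the box size in that mode;
- adaptive `ceil(roi_size / output_size)` samples per bin when `sampling_ratio <= 0`;
- torchvision-style bilinear border handling.

The names `roi_align_ref` and `bilinear` are hypothetical helpers, not part of any library. The sketch favors clarity over speed.

```python
import math

import numpy as np


def bilinear(feat, y, x):
    """Bilinearly sample the 2-D map `feat` at (y, x), torchvision-style borders (assumed)."""
    height, width = feat.shape
    # Samples more than one pixel outside the map contribute zero.
    if y < -1.0 or y > height or x < -1.0 or x > width:
        return 0.0
    y, x = max(y, 0.0), max(x, 0.0)
    y_low, x_low = int(y), int(x)
    if y_low >= height - 1:
        y_low = y_high = height - 1
        y = float(y_low)
    else:
        y_high = y_low + 1
    if x_low >= width - 1:
        x_low = x_high = width - 1
        x = float(x_low)
    else:
        x_high = x_low + 1
    ly, lx = y - y_low, x - x_low
    hy, hx = 1.0 - ly, 1.0 - lx
    return (hy * hx * feat[y_low, x_low] + hy * lx * feat[y_low, x_high]
            + ly * hx * feat[y_high, x_low] + ly * lx * feat[y_high, x_high])


def roi_align_ref(data, rois, output_size, spatial_scale=1.0,
                  sampling_ratio=-1, aligned=True):
    """Hypothetical reference RoIAlign on NCHW `data`; rois rows are [batch_idx, x1, y1, x2, y2]."""
    out_h, out_w = output_size
    channels = data.shape[1]
    out = np.zeros((rois.shape[0], channels, out_h, out_w), dtype=np.float64)
    offset = 0.5 if aligned else 0.0
    for r, (b, x1, y1, x2, y2) in enumerate(rois):
        start_w = x1 * spatial_scale - offset
        start_h = y1 * spatial_scale - offset
        roi_w = (x2 * spatial_scale - offset) - start_w
        roi_h = (y2 * spatial_scale - offset) - start_h
        if not aligned:
            # Legacy (aligned=False) behaviour: force every ROI to be at least 1x1.
            roi_w, roi_h = max(roi_w, 1.0), max(roi_h, 1.0)
        bin_h, bin_w = roi_h / out_h, roi_w / out_w
        # sampling_ratio <= 0 selects an adaptive number of samples per bin.
        grid_h = sampling_ratio if sampling_ratio > 0 else math.ceil(roi_h / out_h)
        grid_w = sampling_ratio if sampling_ratio > 0 else math.ceil(roi_w / out_w)
        count = max(grid_h * grid_w, 1)
        for c in range(channels):
            feat = data[int(b), c]
            for ph in range(out_h):
                for pw in range(out_w):
                    acc = 0.0
                    for iy in range(grid_h):
                        y = start_h + ph * bin_h + (iy + 0.5) * bin_h / grid_h
                        for ix in range(grid_w):
                            x = start_w + pw * bin_w + (ix + 0.5) * bin_w / grid_w
                            acc += bilinear(feat, y, x)
                    # Average over all sample points in the bin.
                    out[r, c, ph, pw] = acc / count
    return out
```

With the arrays from the reproduction script, `roi_align_ref(data, x, (3, 3), spatial_scale=1.0, sampling_ratio=-1, aligned=True)` would give a third set of values to compare with both outputs. In this sketch the 1x1 clamp applies only when `aligned=False`. An implementation that clamped unconditionally would diverge from it whenever a box is narrower or shorter than one pixel. Whether that happens in the Relay lowering is only a hypothesis worth checking; this report does not establish it.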

### Actual behavior

Some entries differ by more than 0.1.
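The helper below makes "differ by more than 0.1" concrete by listing every flattened entry whose absolute difference exceeds a threshold. It is hypothetical: the name `report_mismatches` and the default `atol=0.1` are illustrative choices, not part of the original script.

```python
import numpy as np


def report_mismatches(expected, actual, atol=0.1):
    """Return (flat_index, expected, actual, abs_diff) for each entry differing by more than atol."""
    # Compare element-wise on flattened float64 copies of both outputs.
    expected = np.asarray(expected, dtype=np.float64).ravel()
    actual = np.asarray(actual, dtype=np.float64).ravel()
    if expected.shape != actual.shape:
        raise ValueError(f"size mismatch: {expected.shape} vs {actual.shape}")
    diff = np.abs(expected - actual)
    bad = np.flatnonzero(diff > atol)
    return [(int(i), float(expected[i]), float(actual[i]), float(diff[i])) for i in bad]
```

In the reproduction script it could be called as `report_mismatches(res_torch.numpy(), res_torch_tvm)`.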

### Environment

Ubuntu 20.04, TVM 0.9dev, Python 3.8, LLVM 13

### Steps to reproduce

``` python
import warnings

warnings.filterwarnings('ignore')

import numpy as np
import torch
import torchvision
import tvm
from tvm import relay
from tvm.contrib import graph_executor

N = 1
C = 3
H = 3
W = 3
num = 1
aligned = True

target = tvm.target.Target("llvm")
np.random.seed(1)
dev = tvm.cpu(0)

# Random NCHW feature map; cast to float32 so TVM and PyTorch see identical inputs.
data = np.random.rand(N, C, H, W).astype("float32")

# Random boxes in (x1, y1, x2, y2) order, scaled to the feature-map size.
a, b = np.random.rand(num, 1), np.random.rand(num, 1)
x_min = np.minimum(a, b) * H
x_max = np.maximum(a, b) * H
a, b = np.random.rand(num, 1), np.random.rand(num, 1)
y_min = np.minimum(a, b) * W
y_max = np.maximum(a, b) * W
boxes = np.concatenate([x_min, y_min, x_max, y_max], axis=1)

# Prepend batch index 0, giving rois of shape (num, 5): [batch_idx, x1, y1, x2, y2].
x = np.pad(boxes, pad_width=((0, 0), (1, 0)), mode='constant').astype("float32")

m = torchvision.ops.RoIAlign(
    output_size=3, spatial_scale=1, sampling_ratio=-1, aligned=aligned)
res_torch = m.forward(torch.Tensor(data), torch.Tensor(x))
print("torch:", res_torch.numpy().ravel())

# Trace the module and import it into Relay.
scripted_model = torch.jit.trace(
    m, example_inputs=(torch.Tensor(data), torch.Tensor(x))).eval()
torch_mod, torch_params = relay.frontend.from_pytorch(
    scripted_model, [('input', data.shape), ('rois', x.shape)])

with tvm.transform.PassContext(opt_level=3):
    lib = relay.build(torch_mod, target=target, params=torch_params)

gen_module = graph_executor.GraphModule(lib['default'](dev))
map_inputs = {"input": data, "rois": x}
gen_module.set_input(**map_inputs)
gen_module.run()
res_torch_tvm = gen_module.get_output(0).numpy()
print("torch_tvm:", res_torch_tvm.ravel())

# Largest element-wise discrepancy between the two backends.
print("max abs diff:", np.abs(res_torch.numpy() - res_torch_tvm).max())
```

--

This is an automated message from the Apache Git Service.
