You are viewing a plain text version of this content. The canonical link for it is here.

Posted to commits@kyuubi.apache.org by ch...@apache.org on 2021/12/29 05:37:12 UTC

[incubator-kyuubi] branch master updated: [KYUUBI #1634][FEATURE] Redact secret information from SparkSQLEngine UI

This is an automated email from the ASF dual-hosted git repository.

chengpan pushed a commit to branch master

in repository https://gitbox.apache.org/repos/asf/incubator-kyuubi.git

The following commit(s) were added to refs/heads/master by this push:

new 7d68aa0 [KYUUBI #1634][FEATURE] Redact secret information from SparkSQLEngine UI

7d68aa0 is described below

commit 7d68aa07ff172c2c8deb65c891cce04370a446ca

Author: minyk <mi...@gmail.com>

AuthorDate: Wed Dec 29 13:37:03 2021 +0800

[KYUUBI #1634][FEATURE] Redact secret information from SparkSQLEngine UI

<!--

Thanks for sending a pull request!

Here are some tips for you:

1. If this is your first time, please read our contributor guidelines: https://kyuubi.readthedocs.io/en/latest/community/contributions.html

2. If the PR is related to an issue in https://github.com/apache/incubator-kyuubi/issues, add '[KYUUBI #XXXX]' in your PR title, e.g., '[KYUUBI #XXXX] Your PR title ...'.

3. If the PR is unfinished, add '[WIP]' in your PR title, e.g., '[WIP][KYUUBI #XXXX] Your PR title ...'.

-->

### _Why are the changes needed?_

<!--

Please clarify why the changes are needed. For instance,

1. If you add a feature, you can talk about the use case of it.

2. If you fix a bug, you can clarify why it is a bug.

-->

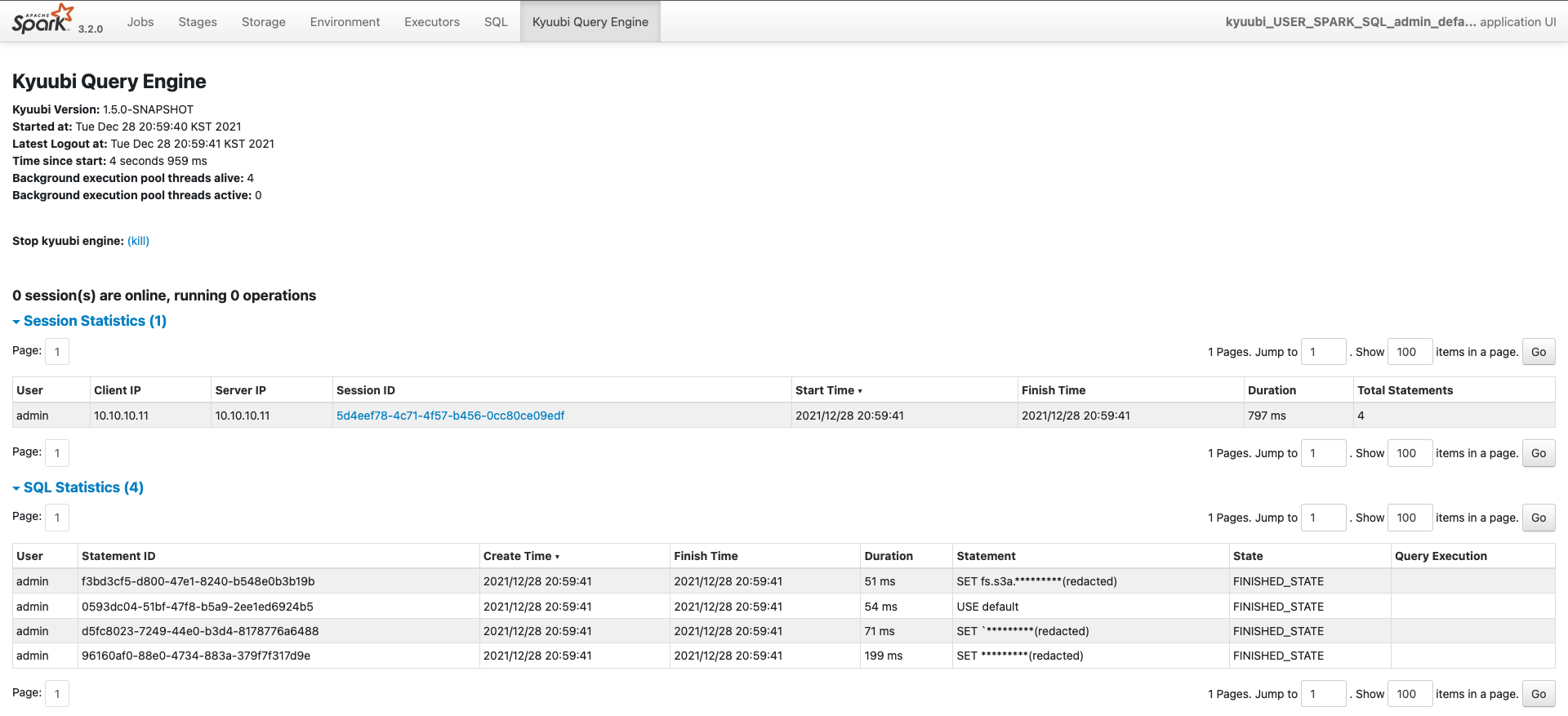

SparkSQLEngine's Kyuubi UI tab shows SQL statements. In some cases, these informations are secret information like S3 access/secret key. Spark SQL UI tab already redact with `spark.redaction.string.regex` configuration. Kyuubi also should redact secret informations with this property.

### _How was this patch tested?_

- [X] Add some test cases that check the changes thoroughly including negative and positive cases if possible

- [X] Add screenshots for manual tests if appropriate

with below property and query:

spark-defaults.conf:

```

spark.redaction.string.regex (?i)url|access.*|secret.*|password

```

query:

```

SET fs.s3a.access.key=testkey

```

- [X] [Run test](https://kyuubi.readthedocs.io/en/latest/develop_tools/testing.html#running-tests) locally before make a pull request

Closes #1640 from minyk/redact-ui.

Closes #1634

4051522a [minyk] set job description with setJobGroup()

3a8e0e91 [minyk] use redactedStatement

c84778e3 [minyk] move to SparkOperation

97a4fb4a [minyk] fix code formatting

79fe52d3 [minyk] redact UI with `spark.redaction.string.regex`

Authored-by: minyk <mi...@gmail.com>

Signed-off-by: Cheng Pan <ch...@apache.org>

---

.../engine/spark/events/SparkOperationEvent.scala | 2 +-

.../engine/spark/operation/SparkOperation.scala | 5 ++-

.../org/apache/spark/kyuubi/SparkUtilsHelper.scala | 38 ++++++++++++++++++++++

.../scala/org/apache/spark/ui/EngineTabSuite.scala | 26 ++++++++++++++-

4 files changed, 68 insertions(+), 3 deletions(-)

diff --git a/externals/kyuubi-spark-sql-engine/src/main/scala/org/apache/kyuubi/engine/spark/events/SparkOperationEvent.scala b/externals/kyuubi-spark-sql-engine/src/main/scala/org/apache/kyuubi/engine/spark/events/SparkOperationEvent.scala

index 489d901..4b3a598 100644

--- a/externals/kyuubi-spark-sql-engine/src/main/scala/org/apache/kyuubi/engine/spark/events/SparkOperationEvent.scala

+++ b/externals/kyuubi-spark-sql-engine/src/main/scala/org/apache/kyuubi/engine/spark/events/SparkOperationEvent.scala

@@ -77,7 +77,7 @@ object SparkOperationEvent {

val status = operation.getStatus

new SparkOperationEvent(

operation.statementId,

- operation.statement,

+ operation.redactedStatement,

operation.shouldRunAsync,

status.state.name(),

status.lastModified,

diff --git a/externals/kyuubi-spark-sql-engine/src/main/scala/org/apache/kyuubi/engine/spark/operation/SparkOperation.scala b/externals/kyuubi-spark-sql-engine/src/main/scala/org/apache/kyuubi/engine/spark/operation/SparkOperation.scala

index f0a6c6f..c440879 100644

--- a/externals/kyuubi-spark-sql-engine/src/main/scala/org/apache/kyuubi/engine/spark/operation/SparkOperation.scala

+++ b/externals/kyuubi-spark-sql-engine/src/main/scala/org/apache/kyuubi/engine/spark/operation/SparkOperation.scala

@@ -22,6 +22,7 @@ import java.time.ZoneId

import org.apache.commons.lang3.StringUtils

import org.apache.hive.service.rpc.thrift.{TRowSet, TTableSchema}

+import org.apache.spark.kyuubi.SparkUtilsHelper.redact

import org.apache.spark.sql.{DataFrame, Row, SparkSession}

import org.apache.spark.sql.types.StructType

@@ -57,6 +58,8 @@ abstract class SparkOperation(opType: OperationType, session: Session)

protected def resultSchema: StructType

+ def redactedStatement: String = redact(spark.sessionState.conf.stringRedactionPattern, statement)

+

protected def cleanup(targetState: OperationState): Unit = state.synchronized {

if (!isTerminalState(state)) {

setState(targetState)

@@ -97,7 +100,7 @@ abstract class SparkOperation(opType: OperationType, session: Session)

protected def withLocalProperties[T](f: => T): T = {

try {

- spark.sparkContext.setJobGroup(statementId, statement, forceCancel)

+ spark.sparkContext.setJobGroup(statementId, redactedStatement, forceCancel)

spark.sparkContext.setLocalProperty(KYUUBI_SESSION_USER_KEY, session.user)

spark.sparkContext.setLocalProperty(KYUUBI_STATEMENT_ID_KEY, statementId)

schedulerPool match {

diff --git a/externals/kyuubi-spark-sql-engine/src/main/scala/org/apache/spark/kyuubi/SparkUtilsHelper.scala b/externals/kyuubi-spark-sql-engine/src/main/scala/org/apache/spark/kyuubi/SparkUtilsHelper.scala

new file mode 100644

index 0000000..4af4002

--- /dev/null

+++ b/externals/kyuubi-spark-sql-engine/src/main/scala/org/apache/spark/kyuubi/SparkUtilsHelper.scala

@@ -0,0 +1,38 @@

+/*

+ * Licensed to the Apache Software Foundation (ASF) under one or more

+ * contributor license agreements. See the NOTICE file distributed with

+ * this work for additional information regarding copyright ownership.

+ * The ASF licenses this file to You under the Apache License, Version 2.0

+ * (the "License"); you may not use this file except in compliance with

+ * the License. You may obtain a copy of the License at

+ *

+ * http://www.apache.org/licenses/LICENSE-2.0

+ *

+ * Unless required by applicable law or agreed to in writing, software

+ * distributed under the License is distributed on an "AS IS" BASIS,

+ * WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+ * See the License for the specific language governing permissions and

+ * limitations under the License.

+ */

+

+package org.apache.spark.kyuubi

+

+import scala.util.matching.Regex

+

+import org.apache.spark.util.Utils

+

+import org.apache.kyuubi.Logging

+

+/**

+ * A place to invoke non-public APIs of [[Utils]], anything to be added here need to

+ * think twice

+ */

+object SparkUtilsHelper extends Logging {

+

+ /**

+ * Redact the sensitive information in the given string.

+ */

+ def redact(regex: Option[Regex], text: String): String = {

+ Utils.redact(regex, text)

+ }

+}

diff --git a/externals/kyuubi-spark-sql-engine/src/test/scala/org/apache/spark/ui/EngineTabSuite.scala b/externals/kyuubi-spark-sql-engine/src/test/scala/org/apache/spark/ui/EngineTabSuite.scala

index 6b7f091..50ddf0a 100644

--- a/externals/kyuubi-spark-sql-engine/src/test/scala/org/apache/spark/ui/EngineTabSuite.scala

+++ b/externals/kyuubi-spark-sql-engine/src/test/scala/org/apache/spark/ui/EngineTabSuite.scala

@@ -28,7 +28,8 @@ import org.apache.kyuubi.operation.HiveJDBCTestHelper

class EngineTabSuite extends WithSparkSQLEngine with HiveJDBCTestHelper {

override def withKyuubiConf: Map[String, String] = Map(

"spark.ui.enabled" -> "true",

- "spark.ui.port" -> "0")

+ "spark.ui.port" -> "0",

+ "spark.sql.redaction.string.regex" -> "(?i)url|access|secret|password")

override def beforeAll(): Unit = {

SparkContext.getActive.foreach(_.stop())

@@ -136,5 +137,28 @@ class EngineTabSuite extends WithSparkSQLEngine with HiveJDBCTestHelper {

}

}

+ test("statement redact for engine tab") {

+ assert(spark.sparkContext.ui.nonEmpty)

+ val client = HttpClients.createDefault()

+ val req = new HttpGet(spark.sparkContext.uiWebUrl.get + "/kyuubi/")

+ val response = client.execute(req)

+ assert(response.getStatusLine.getStatusCode === 200)

+ val resp = EntityUtils.toString(response.getEntity)

+ assert(resp.contains("0 session(s) are online,"))

+ withJdbcStatement() { statement =>

+ statement.execute(

+ """

+ |SET

+ | fs.s3a.access.key=testkey

+ """.stripMargin)

+ val response = client.execute(req)

+ assert(response.getStatusLine.getStatusCode === 200)

+ val resp = EntityUtils.toString(response.getEntity)

+

+ // check redacted sql

+ assert(resp.contains("redacted"))

+ }

+ }

+

override protected def jdbcUrl: String = getJdbcUrl

}