You are viewing a plain text version of this content. The canonical link for it is here.

Posted to reviews@spark.apache.org by GitBox <gi...@apache.org> on 2019/04/12 11:21:10 UTC

[GitHub] [spark] deshanxiao commented on a change in pull request #24102:

[SPARK-27171][SQL] Support Full-Partitons scan in limit for the first time

deshanxiao commented on a change in pull request #24102: [SPARK-27171][SQL] Support Full-Partitons scan in limit for the first time

URL: https://github.com/apache/spark/pull/24102#discussion_r274864400

##########

File path: sql/core/src/main/scala/org/apache/spark/sql/execution/SparkPlan.scala

##########

@@ -344,7 +344,7 @@ abstract class SparkPlan extends QueryPlan[SparkPlan] with Logging with Serializ

while (buf.size < n && partsScanned < totalParts) {

// The number of partitions to try in this iteration. It is ok for this number to be

// greater than totalParts because we actually cap it at totalParts in runJob.

- var numPartsToTry = 1L

+ var numPartsToTry = Math.max(Math.ceil(sqlContext.conf.limitStartUpFactor * totalParts).toLong, 1L)

Review comment:

@cloud-fan Sorry for so late reply. I try to execute a very simple query:

```

select * from tst where data='20180410' and name like 'xx' limit 1; (400 partitions)

```

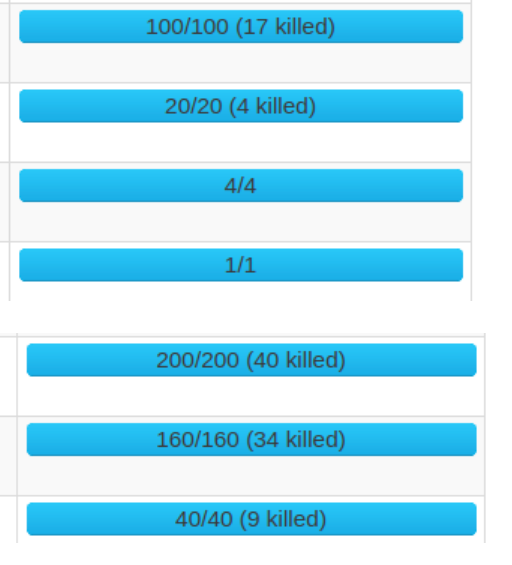

It creates many jobs because "limit" will scan more partitions as the retry increasing (1 -> 4 -> 20 -> 100 -> 200). When I try to add this parameter (0.1). The scan partition from 40 to 200. I think the parameter will increase parallelism to speed up the query. So, different queries may improve performance differently.

----------------------------------------------------------------

This is an automated message from the Apache Git Service.

To respond to the message, please log on to GitHub and use the

URL above to go to the specific comment.

For queries about this service, please contact Infrastructure at:

users@infra.apache.org

With regards,

Apache Git Services

---------------------------------------------------------------------

To unsubscribe, e-mail: reviews-unsubscribe@spark.apache.org

For additional commands, e-mail: reviews-help@spark.apache.org