
Posted to commits@druid.apache.org by GitBox <gi...@apache.org> on 2019/04/20 06:17:30 UTC

[GitHub] [incubator-druid] havannavar opened a new issue #7519: CDI Bean manager not available : coordinator failed to start in 0.14-incubation

havannavar opened a new issue #7519: CDI Bean manager not available : coordinator failed to start in 0.14-incubation

URL: https://github.com/apache/incubator-druid/issues/7519

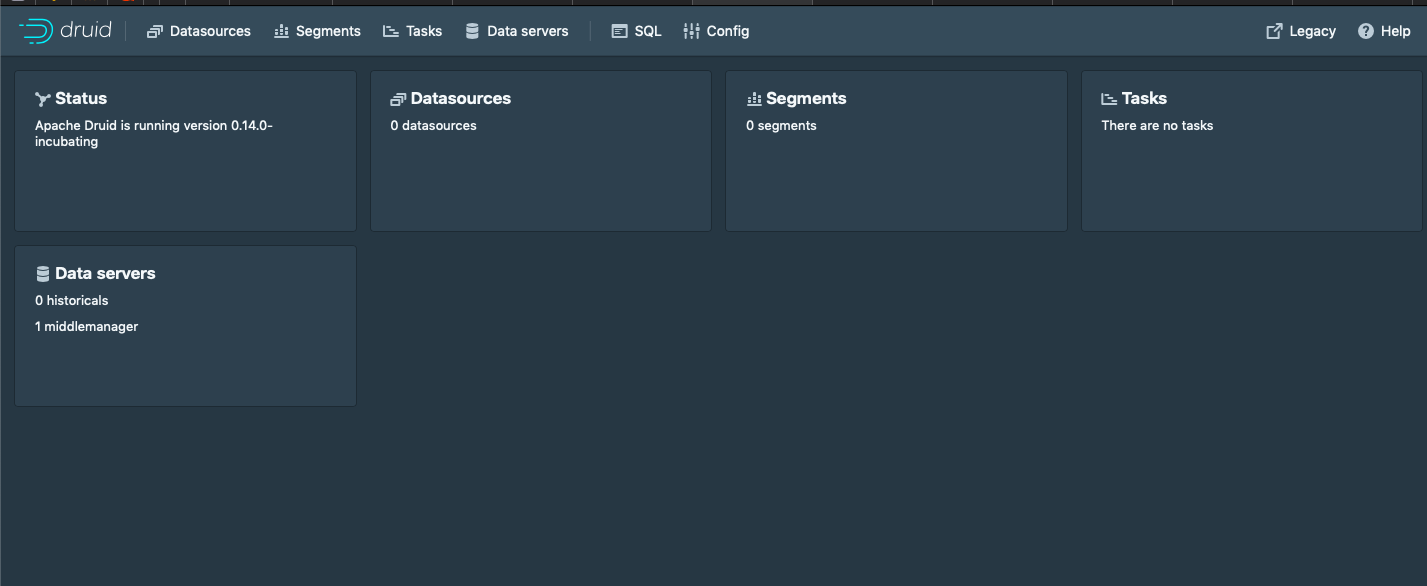

I downloaded the new version, **0.14-incubation**, and set it up the same way as 0.13-incubation on a single-machine cluster, but startup fails with the error `CDI Bean manager not available`.

### Affected Version

0.14.0-incubating

### Description

This is my configuration

```
#
# Extensions
#
druid.extensions.loadList=[ "druid-kafka-indexing-service", "druid-s3-extensions", "postgresql-metadata-storage"]

#
# Logging
#
# Log all runtime properties on startup. Disable to avoid logging properties on startup:
druid.startup.logging.logProperties=true

#
# Hostname
#
druid.host=<ip>

#
# Zookeeper
#
druid.zk.service.host=<zk-host>
druid.zk.paths.base=/druid

#
# Metadata storage
#
# For PostgreSQL (make sure to additionally include the Postgres extension):
druid.metadata.storage.type=postgresql
druid.metadata.storage.connector.connectURI=jdbc:postgresql://my.domain.com:5432/druid
druid.metadata.storage.connector.user=abc
druid.metadata.storage.connector.password=abc

#
# Deep storage
#
# For S3:
druid.storage.type=s3
druid.storage.bucket=events
druid.storage.baseKey=druid/segments
druid.s3.accessKey=asd
druid.s3.secretKey=acbdge

#
# Indexing service logs
#
# For S3:
druid.indexer.logs.type=s3
druid.indexer.logs.s3Bucket=events
druid.indexer.logs.s3Prefix=druid/indexing-logs

#
# Service discovery
#
druid.selectors.indexing.serviceName=druid/overlord
druid.selectors.coordinator.serviceName=druid/coordinator

#
# Monitoring
#
druid.monitoring.monitors=["org.apache.druid.java.util.metrics.JvmMonitor"]
druid.emitter=logging
druid.emitter.logging.logLevel=debug

# Storage type of double columns
# omitting this will lead to index double as float at the storage layer
druid.indexing.doubleStorage=double

#
# SQL
#
druid.sql.enable=true
```

and the Dockerfile is:

```
FROM openjdk:8-jre

MAINTAINER Sateesh H

RUN mkdir -p /opt/

RUN apt-get update && apt-get install -y libtest-www-mechanize-perl

# add (the rest of) our code
COPY . /opt/

RUN mkdir log && \
    mkdir -p opt/tmp && \
    mkdir -p opt/druid/indexing-logs && \
    mkdir -p opt/druid/segments && \
    mkdir -p opt/druid/segment-cache && \
    mkdir -p opt/druid/task && \
    mkdir -p opt/druid/hadoop-tmp && \
    mkdir -p opt/druid/pids && \
    mkdir -p _config/common && \
    mkdir -p _config/specific

EXPOSE 8081
EXPOSE 8082
EXPOSE 8083
EXPOSE 8090
EXPOSE 3306
EXPOSE 2181
EXPOSE 8888

CMD /opt/druid/bin/supervise -c /opt/druid/conf/druid-cluster.conf
```

The above configuration creates 10 tables in the PostgreSQL database.

Though I can see console

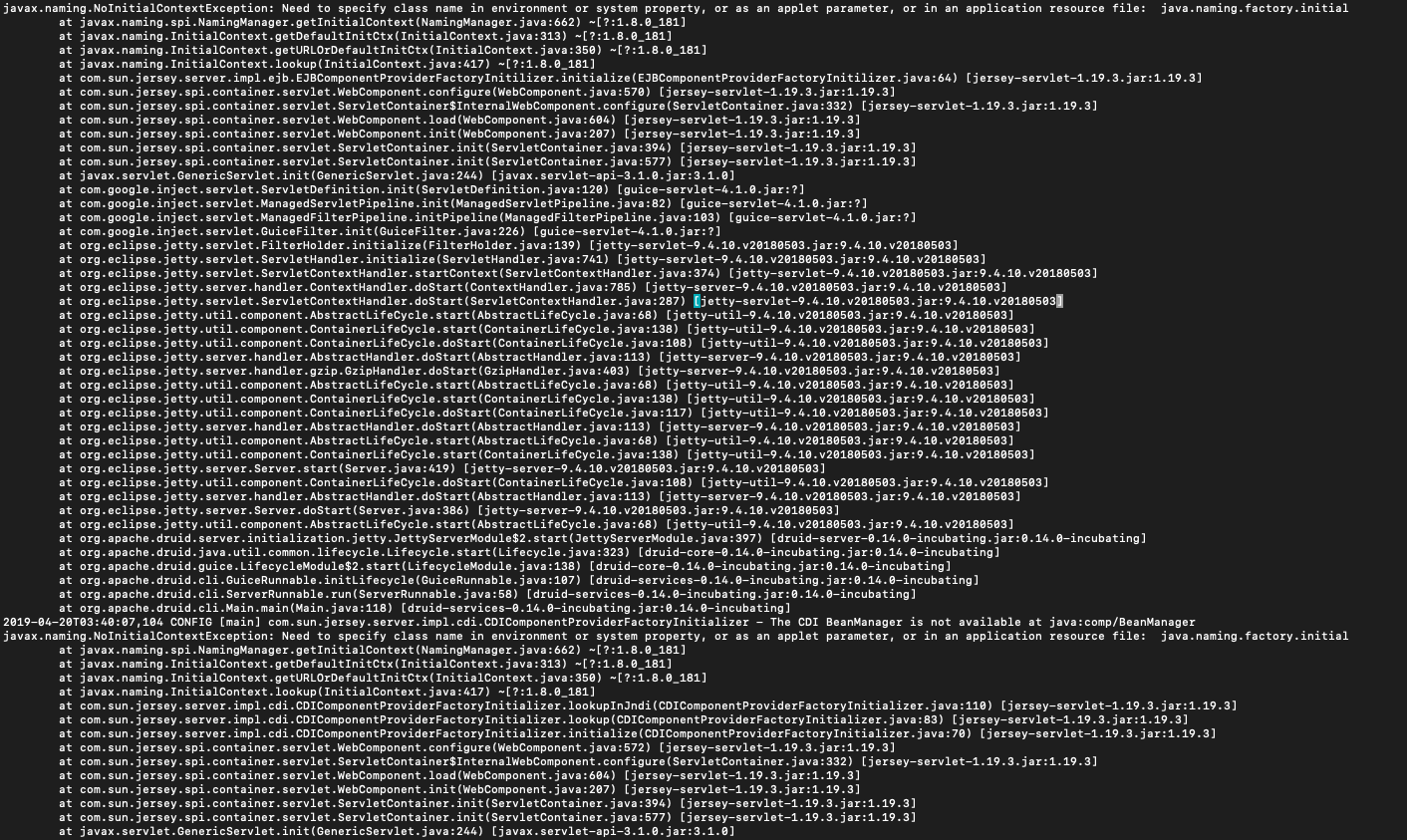

But, able to see following error in coordinator, overlord services.

Also, when I create a datasource, the request returns `{"id":"event_datasource"}`, but the datasource does not seem to be created in the datasource table and does not appear in the console.
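For anyone trying to reproduce this, here is a minimal sketch of how the supervisor's state could be checked. It assumes that the `{"id":"event_datasource"}` response came from submitting a Kafka supervisor spec to the Overlord, and that the Overlord is reachable on `localhost:8090` (the port exposed in the Dockerfile above). The helper names `supervisor_status_url` and `fetch_json` are made up for this illustration.

```python
import json
import urllib.parse
import urllib.request


def supervisor_status_url(overlord_base: str, supervisor_id: str) -> str:
    """Build the Overlord URL that reports the status of one supervisor."""
    quoted_id = urllib.parse.quote(supervisor_id, safe="")
    base = overlord_base.rstrip("/")
    return f"{base}/druid/indexer/v1/supervisor/{quoted_id}/status"


def fetch_json(url: str, timeout: float = 10.0):
    """Send a GET request and decode the JSON response body."""
    with urllib.request.urlopen(url, timeout=timeout) as resp:
        return json.loads(resp.read().decode("utf-8"))


# Example usage (assumption: Overlord listening on localhost:8090):
#   url = supervisor_status_url("http://localhost:8090", "event_datasource")
#   print(json.dumps(fetch_json(url), indent=2))
```

If the status call succeeds but the datasource is still missing from the console, that may simply mean no segments have been published yet; as far as I understand, a datasource only shows up there once it has segments.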

@vogievetsky @jon-wei @gianm @egor-ryashin, could you please help me?

Thanks in advance.
