Posted to commits@hudi.apache.org by GitBox <gi...@apache.org> on 2022/10/31 16:11:53 UTC

[GitHub] [hudi] yabha-isomap opened a new issue, #7100: [SUPPORT] Custom HoodieRecordPayload for use in flink sql

yabha-isomap opened a new issue, #7100:

URL: https://github.com/apache/hudi/issues/7100

1. I am trying to use Apache Hudi with Flink SQL by following [Hudi's Flink guide](https://hudi.apache.org/docs/flink-quick-start-guide).

2. The basics are working, but now I need to provide a custom implementation of HoodieRecordPayload, as suggested in [this FAQ](https://hudi.apache.org/docs/faq#can-i-implement-my-own-logic-for-how-input-records-are-merged-with-record-on-storage).

3. When I pass this config as shown in the following listing, it doesn't work: my custom class (MyHudiPoc.Poc) doesn't get picked up, and the default behaviour continues.

```sql
CREATE TABLE t1(
  uuid VARCHAR(20) PRIMARY KEY NOT ENFORCED,
  name VARCHAR(10),
  age INT,
  ts TIMESTAMP(3),
  `partition` VARCHAR(20)
)
PARTITIONED BY (`partition`)
WITH (
  'connector' = 'hudi',
  'path' = '/tmp/hudi',
  'hoodie.compaction.payload.class' = 'MyHudiPoc.Poc', -- My custom class
  'hoodie.datasource.write.payload.class' = 'MyHudiPoc.Poc', -- My custom class
  'write.payload.class' = 'MyHudiPoc.Poc', -- My custom class
  'table.type' = 'MERGE_ON_READ'
);

INSERT INTO t1 VALUES
  ('id1','Danny',23,TIMESTAMP '1970-01-01 00:00:01','par1'),
  ('id2','Stephen',33,TIMESTAMP '1970-01-01 00:00:02','par1'),
  ('id3','Julian',53,TIMESTAMP '1970-01-01 00:00:03','par2'),
  ('id4','Fabian',31,TIMESTAMP '1970-01-01 00:00:04','par2'),
  ('id5','Sophia',18,TIMESTAMP '1970-01-01 00:00:05','par3'),
  ('id6','Emma',20,TIMESTAMP '1970-01-01 00:00:06','par3'),
  ('id7','Bob',44,TIMESTAMP '1970-01-01 00:00:07','par4'),
  ('id8','Han',56,TIMESTAMP '1970-01-01 00:00:08','par4');

INSERT INTO t1 VALUES
  ('id1','Danny1',27,TIMESTAMP '1970-01-01 00:00:01','par1');
```

1. I even tried passing it through `/etc/hudi/conf/hudi-default.conf`

```yaml
---
"hoodie.compaction.payload.class": MyHudiPoc.Poc
"hoodie.datasource.write.payload.class": MyHudiPoc.Poc
"write.payload.class": MyHudiPoc.Poc
```

I am also passing my custom jar when starting the Flink SQL client.

```bash
/bin/sql-client.sh embedded \
  -j ../jars/hudi-flink1.15-bundle-0.12.1.jar \
  -j ./plugins/flink-s3-fs-hadoop-1.15.1.jar \
  -j ./plugins/parquet-hive-bundle-1.8.1.jar \
  -j ./plugins/flink-sql-connector-kafka-1.15.1.jar \
  -j my-hudi-poc-1.0-SNAPSHOT.jar \
  shell
```

1. I am able to pass my custom class in the Spark example but not in Flink.

1. I tried with both COW and MOR table types.

Any idea what I am doing wrong?

See listing in the question.

--

This is an automated message from the Apache Git Service.

To respond to the message, please log on to GitHub and use the

URL above to go to the specific comment.

To unsubscribe, e-mail: commits-unsubscribe@hudi.apache.org

For queries about this service, please contact Infrastructure at:

users@infra.apache.org

[GitHub] [hudi] complone commented on issue #7100: [SUPPORT] Custom HoodieRecordPayload for use in flink sql

Posted by GitBox <gi...@apache.org>.

complone commented on issue #7100:

URL: https://github.com/apache/hudi/issues/7100#issuecomment-1298209216

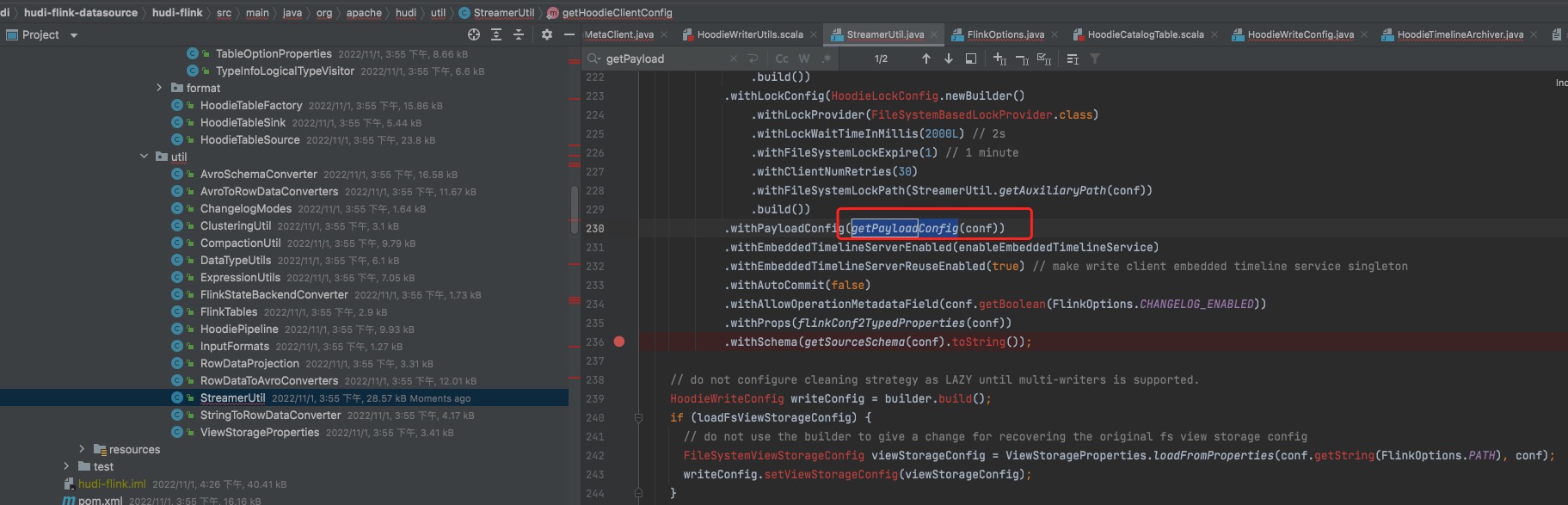

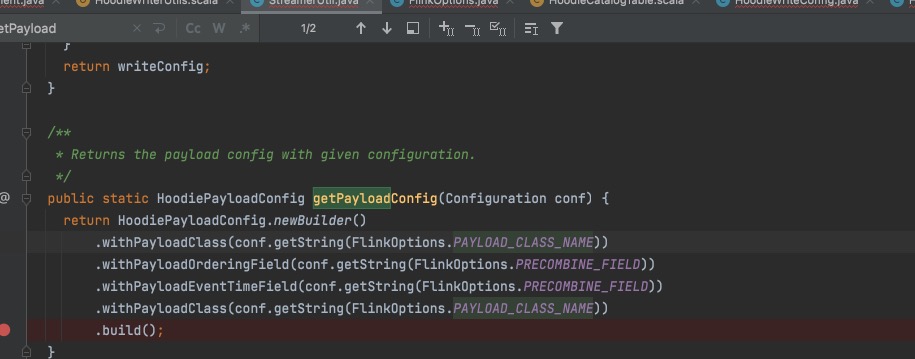

Hello. The `hoodie.compaction.payload.class` option you are using is only mapped to the Spark configuration, in the class `HoodieWriterUtils`.

If you want to set the payload class, I think you should use `write.payload.class`, which is read in `StreamerUtil#getPayloadConfig`.

[GitHub] [hudi] nsivabalan commented on issue #7100: [SUPPORT] Custom HoodieRecordPayload for use in flink sql

Posted by GitBox <gi...@apache.org>.

nsivabalan commented on issue #7100:

URL: https://github.com/apache/hudi/issues/7100#issuecomment-1299564461

@yuzhaojing: can you assist here, please?

[GitHub] [hudi] complone commented on issue #7100: [SUPPORT] Custom HoodieRecordPayload for use in flink sql

Posted by GitBox <gi...@apache.org>.

complone commented on issue #7100:

URL: https://github.com/apache/hudi/issues/7100#issuecomment-1298209281

I also found that there is duplicate code here. I will open a PR to deal with it.

[GitHub] [hudi] complone commented on issue #7100: [SUPPORT] Custom HoodieRecordPayload for use in flink sql

Posted by GitBox <gi...@apache.org>.

complone commented on issue #7100:

URL: https://github.com/apache/hudi/issues/7100#issuecomment-1298242721

Maybe you should use `payload.class`?

[GitHub] [hudi] danny0405 commented on issue #7100: [SUPPORT] Custom HoodieRecordPayload for use in flink sql

Posted by GitBox <gi...@apache.org>.

danny0405 commented on issue #7100:

URL: https://github.com/apache/hudi/issues/7100#issuecomment-1306522726

Did you try `payload.class` instead of `write.payload.class`? We changed the option key recently.

`write.payload.class` is now a fallback option key, but it only works in Flink 1.15.x.

Feel free to reopen the issue if you still have a problem.
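
For illustration, here is a minimal sketch of the table definition from the question reduced to the single `payload.class` key. It assumes a Hudi build whose Flink connector recognizes `payload.class`, and that the reporter's class `MyHudiPoc.Poc` is on the SQL client classpath. Nothing in this thread confirms that this exact DDL makes the class take effect, so treat it as a starting point rather than a verified fix.

```sql
CREATE TABLE t1(
  uuid VARCHAR(20) PRIMARY KEY NOT ENFORCED,
  name VARCHAR(10),
  age INT,
  ts TIMESTAMP(3),
  `partition` VARCHAR(20)
)
PARTITIONED BY (`partition`)
WITH (
  'connector' = 'hudi',
  'path' = '/tmp/hudi',
  -- Assumption: this Hudi version reads the payload class from 'payload.class'.
  -- 'MyHudiPoc.Poc' is the class name used in the question.
  'payload.class' = 'MyHudiPoc.Poc',
  'table.type' = 'MERGE_ON_READ'
);
```

Dropping the Spark-side keys (`hoodie.datasource.write.payload.class`, `hoodie.compaction.payload.class`) keeps the definition to the one option the Flink connector is said to read, which makes it easier to tell whether that option is being honored.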

[GitHub] [hudi] lucienoz commented on issue #7100: [SUPPORT] Custom HoodieRecordPayload for use in flink sql

Posted by "lucienoz (via GitHub)" <gi...@apache.org>.

lucienoz commented on issue #7100:

URL: https://github.com/apache/hudi/issues/7100#issuecomment-1661941802

How do I set `payload.class` for a Spark SQL COW table?

[GitHub] [hudi] yabha-isomap commented on issue #7100: [SUPPORT] Custom HoodieRecordPayload for use in flink sql

Posted by GitBox <gi...@apache.org>.

yabha-isomap commented on issue #7100:

URL: https://github.com/apache/hudi/issues/7100#issuecomment-1298495326

Thanks @complone. I tried that as well, but no luck.

```sql
CREATE TABLE t1(
  uuid VARCHAR(20) PRIMARY KEY NOT ENFORCED,
  name VARCHAR(10),
  age INT,
  ts TIMESTAMP(3),
  `partition` VARCHAR(20)
)
PARTITIONED BY (`partition`)
WITH (
  'connector' = 'hudi',
  'path' = '/tmp/hudi',
  'hoodie.compaction.payload.class' = 'gsHudiPoc.Poc', -- My custom class
  'write.payload.class' = 'gsHudiPoc.Poc', -- My custom class
  'payload.class' = 'gsHudiPoc.Poc', -- My custom class
  'hoodie.datasource.write.payload.class' = 'gsHudiPoc.Poc', -- My custom class
  'table.type' = 'COPY_ON_WRITE'
);
```

Let me try looking into the code of FlinkOptions.java.

[GitHub] [hudi] danny0405 closed issue #7100: [SUPPORT] Custom HoodieRecordPayload for use in flink sql

Posted by GitBox <gi...@apache.org>.

danny0405 closed issue #7100: [SUPPORT] Custom HoodieRecordPayload for use in flink sql

URL: https://github.com/apache/hudi/issues/7100

[GitHub] [hudi] yabha-isomap commented on issue #7100: [SUPPORT] Custom HoodieRecordPayload for use in flink sql

Posted by GitBox <gi...@apache.org>.

yabha-isomap commented on issue #7100:

URL: https://github.com/apache/hudi/issues/7100#issuecomment-1337013456

Thanks. I was able to get it to work with the DataStream API.

One tip for anyone facing this issue: put a debugging message in the constructor of the class (and not in any method) to verify whether your class is getting picked up.

In my case, the class was getting picked up, but the method was not getting called because of an issue in my code.
